It seems like just a few weeks ago that I was talking about the great American Eclipse of 8th April 2024, and how the weather in Greenville, Texas cleared just enough for my family and me to see a good show (see my post of 20 April 2024). Well, this past weekend the Sun decided to stage another celestial event. Massive solar flares and Coronal Mass Ejections (CMEs) sent clouds of solar material racing toward the Earth, producing the biggest display of the Northern Lights, also known as the Aurora Borealis, seen in decades.

So what’s going on here? Why is the Sun so active this year, so active that astronomers predicted the corona during last month’s eclipse would be much bigger and more active than during the eclipse of 2017? Why does the Sun have so many sunspots this year, and what are sunspots anyway? And what do sunspots have to do with the aurora?

Let’s take this one step at a time. First of all, it was Galileo who, with one of the first telescopes, brought to the world’s attention the fact that our Sun is often covered with dark spots; the ancient Greeks had believed that the Sun was a perfect, unblemished disk. Scientists eventually realized that sunspots are areas of the Sun’s surface that are cooler than the regions around them, by a thousand degrees or more. Even so, sunspots are actually quite bright. They only appear dark in comparison to the normal brightness of the Sun’s surface.
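Why can a cooler patch be bright and still look dark? Here is a minimal sketch using the Stefan-Boltzmann law, under which the light a hot surface gives off per square metre rises with the fourth power of its temperature. The two temperatures are assumed round numbers of my own: about 5,800 K for the normal surface and about 4,000 K for a sunspot’s dark core. They are not measurements of any particular spot, and the sketch treats both as ideal black bodies.

```python
# Stefan-Boltzmann law: power radiated per square metre = sigma * T**4
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def radiated_flux(temperature_k: float) -> float:
    """Power emitted per square metre by an ideal (black-body) surface at temperature_k."""
    return SIGMA * temperature_k ** 4

# Assumed round-number temperatures, for illustration only
PHOTOSPHERE_K = 5800.0  # typical temperature of the Sun's visible surface
SUNSPOT_K = 4000.0      # typical temperature of a sunspot's dark core

spot = radiated_flux(SUNSPOT_K)
surface = radiated_flux(PHOTOSPHERE_K)
ratio = spot / surface  # equals (SUNSPOT_K / PHOTOSPHERE_K) ** 4

print(f"Sunspot: {spot / 1e6:.1f} MW per square metre")
print(f"Surface: {surface / 1e6:.1f} MW per square metre")
print(f"A sunspot gives off about {ratio:.0%} as much light per square metre")
```

With these assumed temperatures, a sunspot still pours out roughly 14 megawatts from every square metre. That is a little under a quarter of what the surrounding surface emits, so the spot only looks dark next to its far brighter surroundings.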

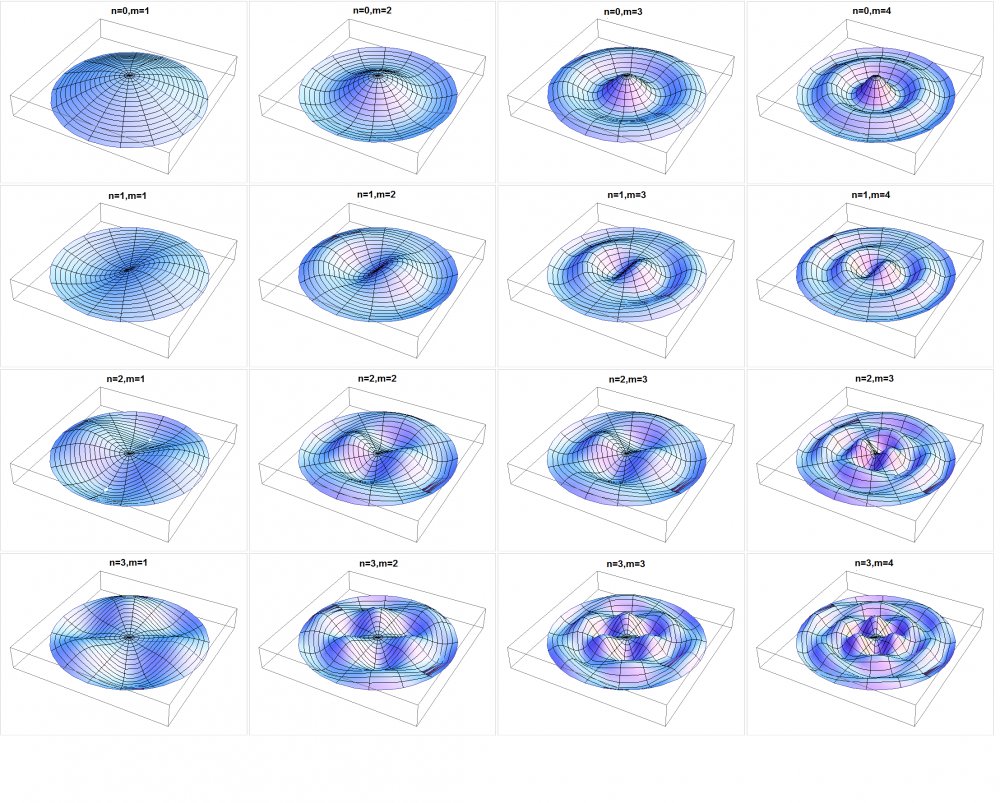

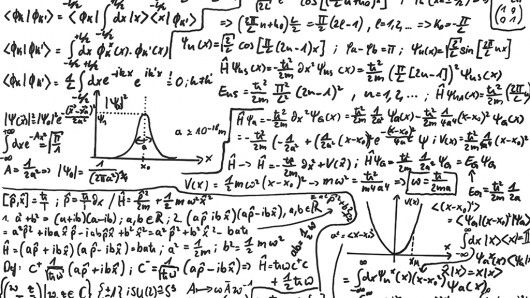

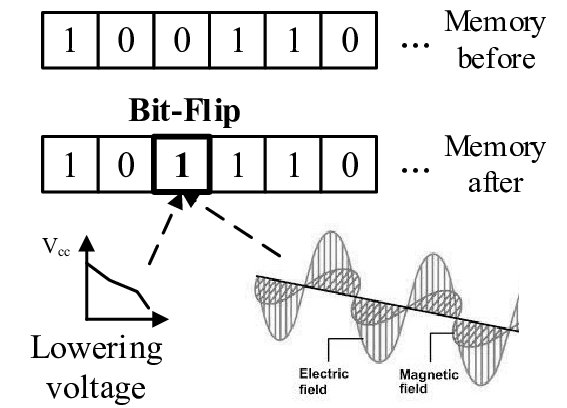

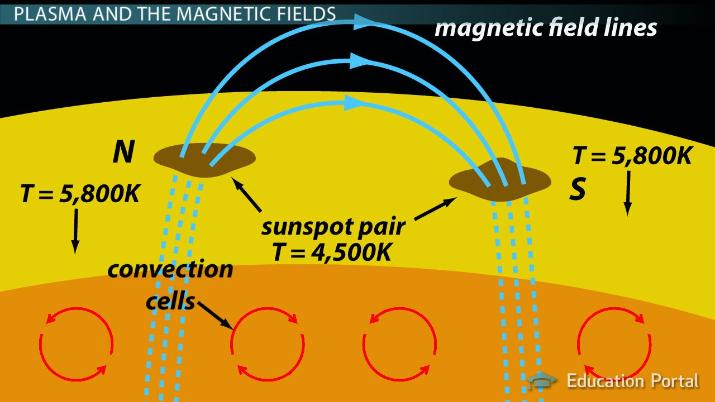

It took several hundred years for scientists to understand that sunspots are caused by the Sun’s magnetic field, which, like the Earth’s, is strongest near the north and south poles. Unlike the Earth, however, which is partly solid and partly liquid, the Sun is a huge ball of ionized gas whose different parts rotate at different speeds. As a result, the magnetic field inside the Sun gets all twisted around itself, and it can break through the Sun’s surface at places other than the poles. Where this happens, the strong magnetic field holds back some of the hot gas rising from below, so that patch of the surface cools down, forming a sunspot.

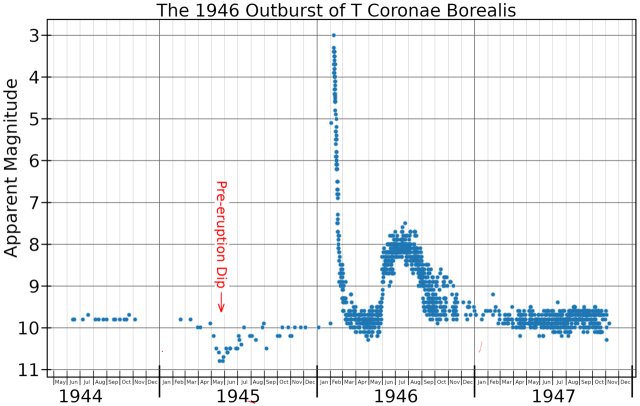

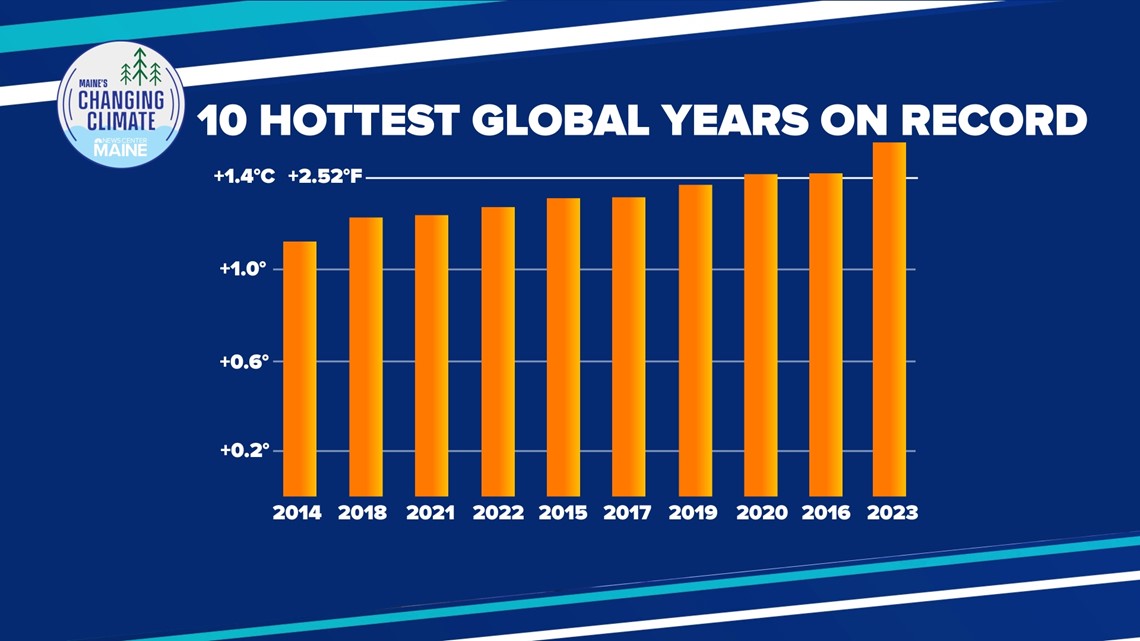

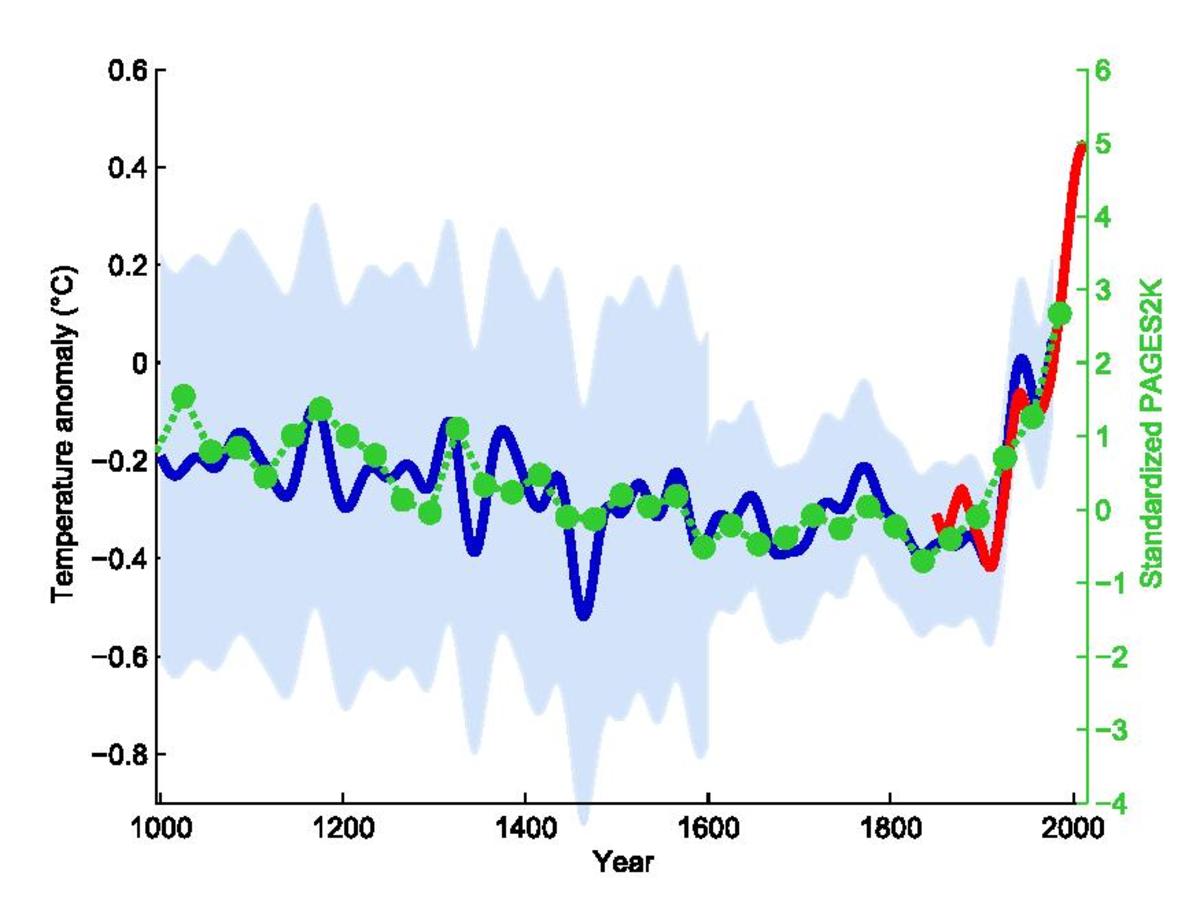

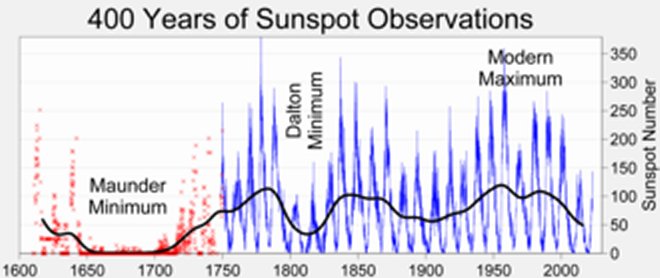

It’s also been recognized since the first half of the 1800s that the Sun has an approximately eleven-year sunspot ‘cycle’. That is to say, in some years the Sun has a very large number of sunspots, a solar maximum, and this year is going to be one of those years. Then five or six years later there will be a minimum number of sunspots. In 2019, around the last solar minimum, the Sun showed no sunspots at all on 281 days of the year. Then five or six years after that comes another sunspot maximum. In 2023, and so far in 2024, there has been at least one sunspot on the Sun’s surface every day. Why the Sun should have a sunspot cycle at all, and why it should last about eleven years, is still poorly understood, as are a great many things about our local star.

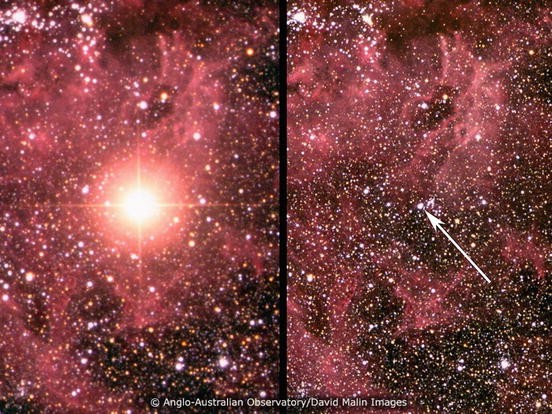

As I said, sunspots form where the twisting and turning of the Sun’s magnetic field breaks through the surface, so sunspots are anything but stable objects. They grow and shrink, change shape, and move closer together or farther apart. Many astronomers and physicists have spent their entire careers studying the behavior of sunspots, and one thing they’ve learned is that there is an extraordinary amount of energy stored in those magnetic twists and turns. If the magnetic field lines become too tangled, they can snap, releasing that energy in an explosion so powerful it makes a hydrogen bomb look like a firecracker.

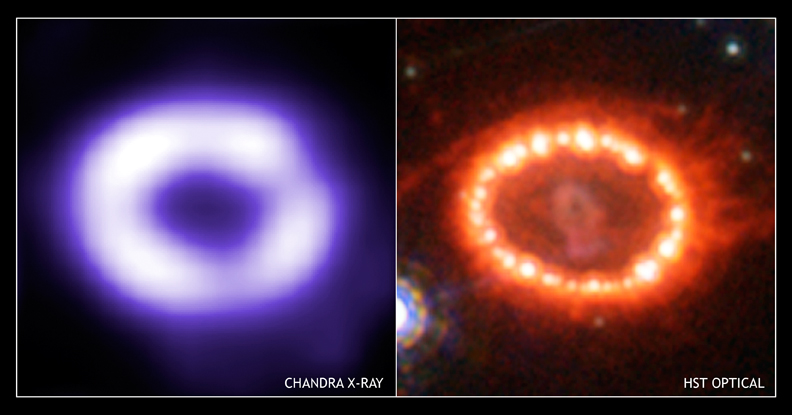

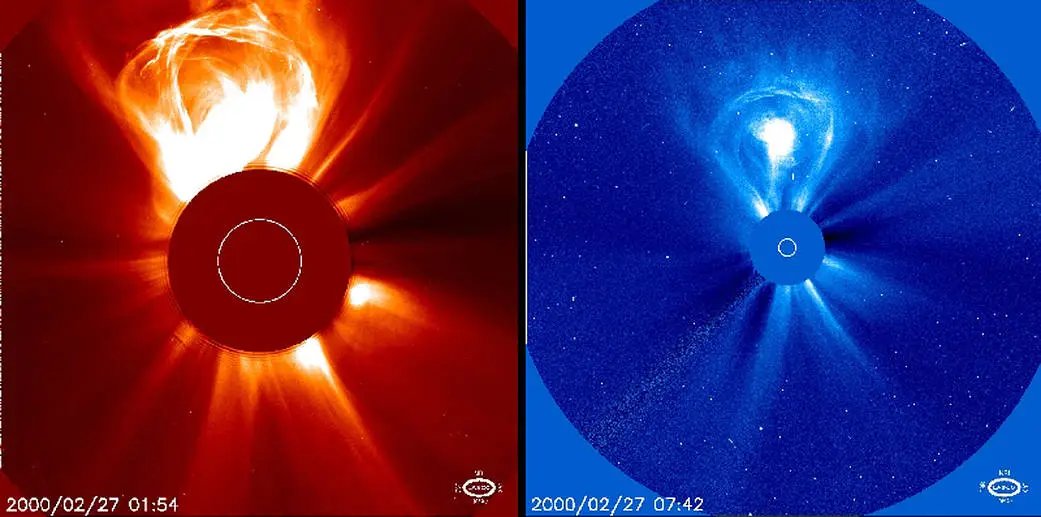

Those explosions around sunspots are known as solar flares, and in them matter from the Sun’s surface can erupt tens of thousands of kilometers into space. Occasionally the eruption is so powerful that matter, and we’re talking about as much as billions of tonnes of it, is hurled away from the Sun and out into space, creating a ‘Coronal Mass Ejection’ or CME.

That’s what happened with sunspot AR3664 beginning on the 8th of May, when it unleashed a series of powerful flares and CMEs. The strongest of those flares measured X5.8 on the scale solar astronomers use to rate flares by their X-ray brightness, and together the CMEs triggered the strongest geomagnetic storm in about two decades. AR3664 is itself a monster, about as wide as 17 Earths laid side by side, making it one of the largest sunspots ever seen. When it erupted, AR3664 wasn’t pointed quite straight at the Earth, but its CMEs were so huge that they still hit our planet in two waves on the nights of May 11th and 12th, moving at speeds in excess of 600 km per second.
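The numbers in the last paragraph can be checked with some back-of-the-envelope arithmetic. This sketch rests on three assumptions of my own rather than anything in the news reports:

* An X-class rating means a peak X-ray brightness, measured near Earth, of the number times 10⁻⁴ watts per square metre. Each lower letter (M, C, B, A) is ten times weaker, following the standard GOES flare scale.
* The Earth is about 12,742 km across.
* The CME crossed the full 149.6 million km from Sun to Earth at a steady 600 km per second.

```python
# Back-of-the-envelope checks on the AR3664 numbers (assumed round values)

# Flare classes: each letter is ten times stronger than the one before.
# Base peak X-ray flux (W/m^2) for a "1.0" flare of each letter.
CLASS_BASE_W_M2 = {"A": 1e-8, "B": 1e-7, "C": 1e-6, "M": 1e-5, "X": 1e-4}

EARTH_DIAMETER_KM = 12_742  # mean diameter of the Earth
SUN_EARTH_KM = 149.6e6      # average Sun-Earth distance (1 astronomical unit)
SECONDS_PER_DAY = 86_400

def flare_peak_flux(rating: str) -> float:
    """Convert a rating such as 'X5.8' into peak X-ray flux in W/m^2."""
    letter, number = rating[0].upper(), float(rating[1:])
    return CLASS_BASE_W_M2[letter] * number

def spot_width_km(earth_widths: float) -> float:
    """Width of a sunspot given as a number of Earths laid side by side."""
    return earth_widths * EARTH_DIAMETER_KM

def transit_days(speed_km_s: float) -> float:
    """Days to cross 1 AU at constant speed (real CMEs speed up or slow down)."""
    return SUN_EARTH_KM / speed_km_s / SECONDS_PER_DAY

print(f"X5.8 flare peak flux: {flare_peak_flux('X5.8'):.1e} W/m^2")
print(f"X5.8 vs M5.8: {flare_peak_flux('X5.8') / flare_peak_flux('M5.8'):.0f} times stronger")
print(f"AR3664 width: about {spot_width_km(17):,.0f} km")
print(f"Sun to Earth at 600 km/s: about {transit_days(600):.1f} days")
```

At a steady 600 km per second the trip takes a little under three days. That is in line with eruptions starting on the 8th of May reaching us over the following weekend. The constant-speed assumption is the weak point: real CMEs speed up or slow down as they plow through the solar wind.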

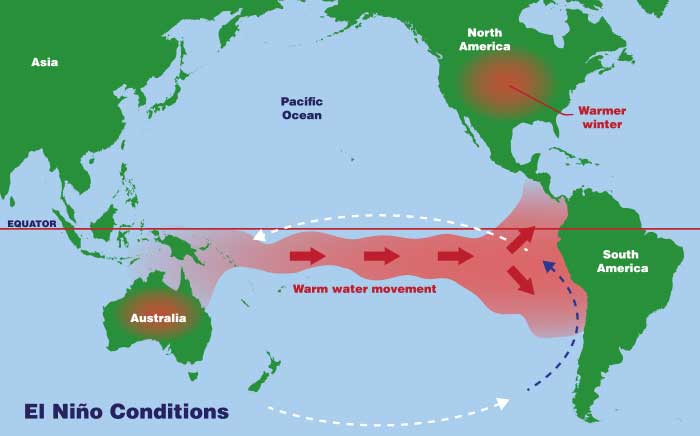

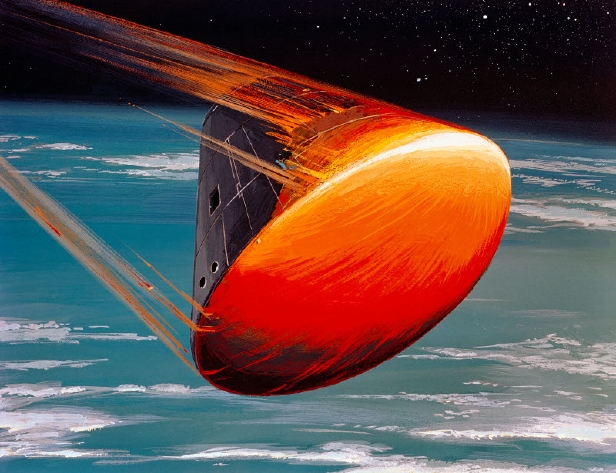

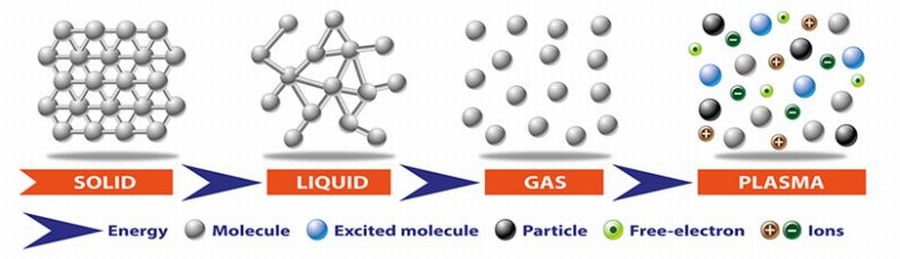

Now the matter in a CME, like most of the Sun’s material, is neither a solid, a liquid, nor a gas like the matter here on Earth; it’s far too hot for that. Instead it’s mostly a huge cloud of protons and electrons called a plasma. As everyone knows, protons and electrons are charged particles, so when they come near the Earth they are steered by the Earth’s magnetic field toward our planet’s poles before finally striking the atmosphere. So it’s the Earth’s magnetic field that normally keeps the aurora confined to our planet’s polar regions.
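Why does a magnetic field steer charged particles at all? A moving charge feels a sideways push that bends its path into a spiral around the field lines. The radius of that spiral, the ‘gyroradius’, is r = m·v / (q·B). This sketch is my own illustration with several assumptions:

* a proton moving at the 600 km per second quoted above, straight across the field;
* roughly 50 nanotesla of field out at the edge of Earth’s magnetic field;
* roughly 30 microtesla near the ground at the equator.

```python
# Gyroradius r = m * v / (q * B): how tightly a magnetic field curls a moving charge
PROTON_MASS_KG = 1.67262192e-27
ELEMENTARY_CHARGE_C = 1.602176634e-19
EARTH_RADIUS_KM = 6_371

def gyroradius_m(speed_m_s: float, field_t: float,
                 mass_kg: float = PROTON_MASS_KG,
                 charge_c: float = ELEMENTARY_CHARGE_C) -> float:
    """Radius (m) of the circle a charge traces when moving perpendicular to a field."""
    return mass_kg * speed_m_s / (charge_c * field_t)

speed = 600e3  # 600 km/s expressed in m/s
# Assumed, rough field strengths in tesla (illustration only)
fields = {
    "edge of Earth's magnetic field": 50e-9,
    "near the ground at the equator": 30e-6,
}

for place, b in fields.items():
    r_km = gyroradius_m(speed, b) / 1000
    print(f"{place}: spiral radius about {r_km:.1f} km "
          f"({r_km / EARTH_RADIUS_KM:.1e} Earth radii)")
```

Even in the thin field far out in space, the spiral is only about a hundred kilometres across, tiny next to the Earth itself. Closer in, it shrinks to a few hundred metres. The particles therefore can’t cut straight across the field. They slide along the field lines instead, and those lines curve down into the atmosphere near the poles.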

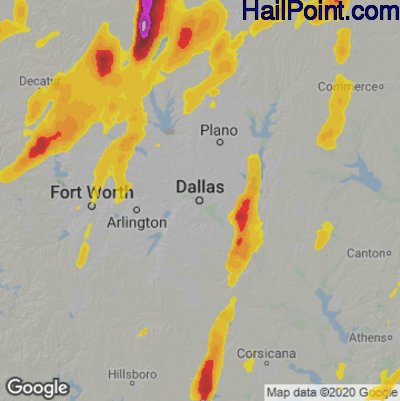

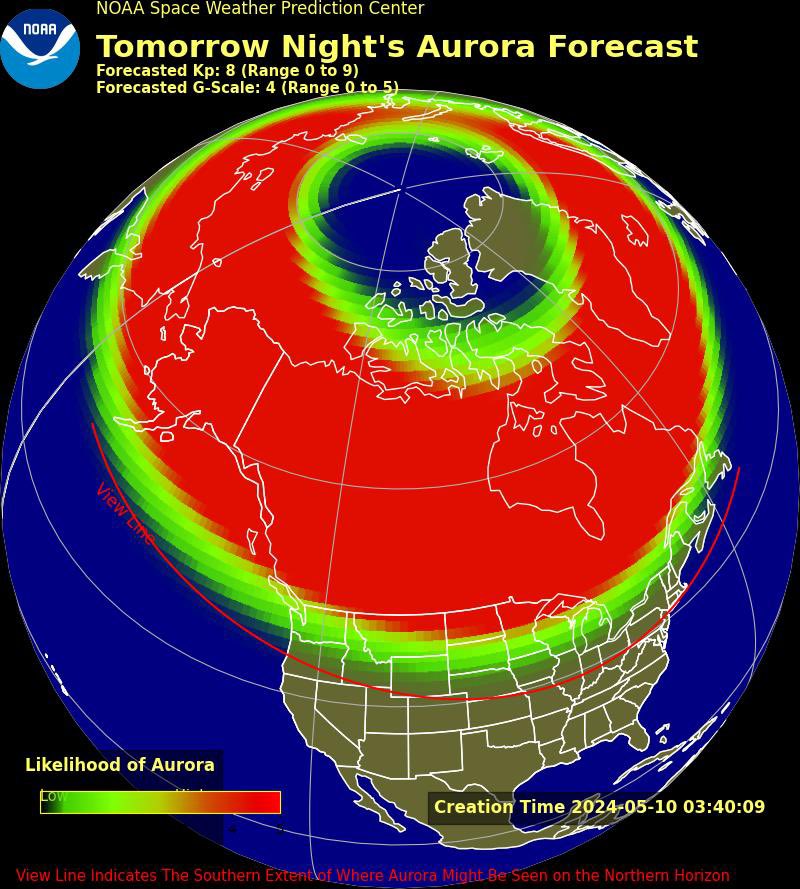

As they enter the atmosphere, the charged particles collide with gas atoms and molecules, which you’ll recall are mostly nitrogen and oxygen. The collisions energize those atoms and molecules, knocking electrons off some of them. As they settle back to their normal state, they give off that extra energy as visible light. Oxygen produces the familiar green glow and the rarer deep red, while nitrogen adds touches of blue and purple. It is this light that creates the dancing streaks of the Aurora. So powerful was the geomagnetic storm generated by AR3664’s CMEs that the aurora spread far beyond the polar regions, reaching so far south that it was even seen by people living in northern Florida.

Here in Philadelphia I should have had a great chance to finally see this natural phenomenon, but, to quote an old song, “clouds got in my way!” On both nights that the aurora was at its maximum, the Delaware Valley was treated to a light but steady rain. More importantly, we were blanketed by a thick layer of stratus clouds that made it impossible to see any part of the sky.

Still, solar maximum isn’t over yet, and astronomers think that anything could happen in the next 4-6 months. So to those of you who managed to see the Aurora Borealis on the nights of May 11th or 12th, I envy you, but I haven’t given up hope of seeing it myself.